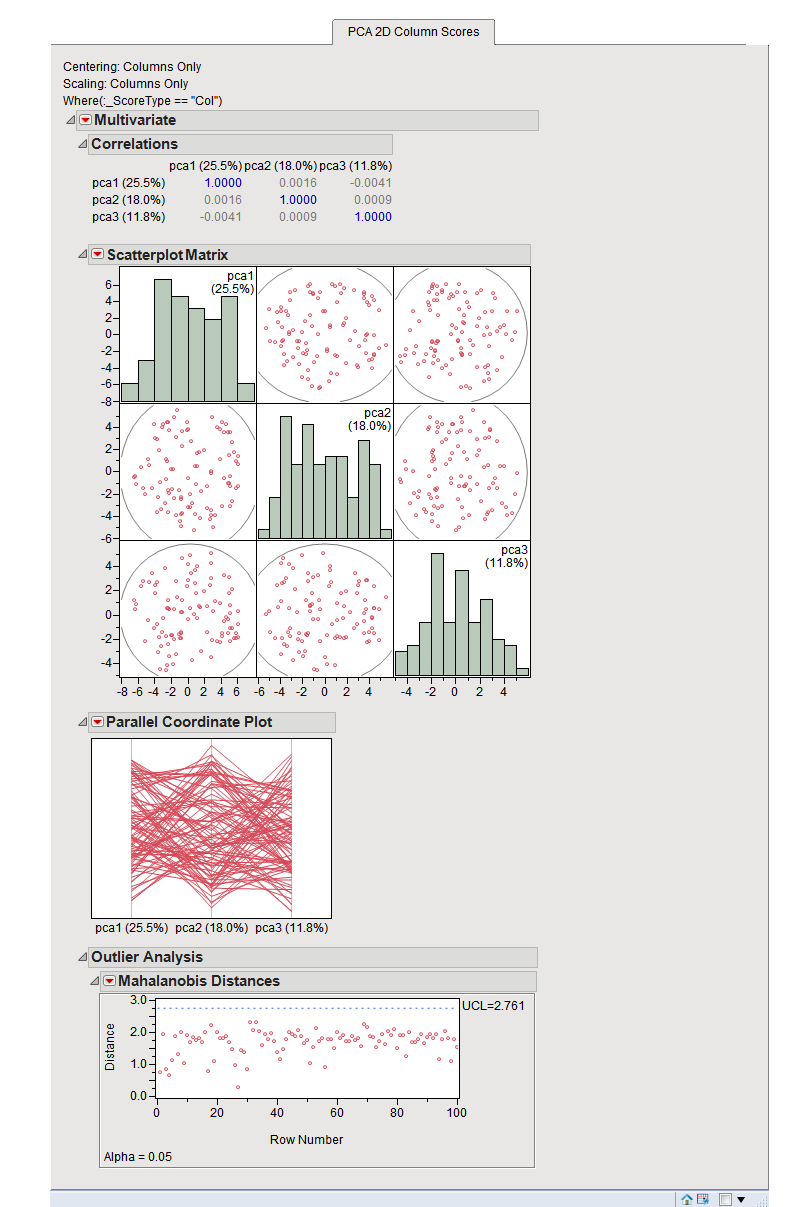

Principal Components Analysis (PCA) is a dimensionality reduction algorithm that can be used to significantly speed up your unsupervised feature learning algorithm. More importantly, understanding PCA will enable us to later implement whitening, which is an important pre-processing step for many algorithms.

Suppose you are training your algorithm on images. Then the input will be somewhat redundant, because the values of adjacent pixels in an image are highly correlated. Concretely, suppose we are training on 16x16 grayscale image patches. Each input is then a vector $x \in \Re^{256}$, with one feature per pixel intensity.

PCA is also sensitive to the scale of each feature. If a feature A takes values in the range 0 to 10000 with a standard deviation of, say, 200, its variance can dominate the principal components over features measured on much smaller scales unless the features are rescaled first. Once PCA has been fitted, the transformed data can be obtained with `x_transformed = pd.DataFrame(pca.transform(x), columns=np.arange(...))`.

How do we set $k$, i.e., how many PCA components should we retain? In our simple 2-dimensional example, it seemed natural to retain 1 out of the 2 components, but for higher-dimensional data this decision is less trivial. For many datasets, the lower-dimensional $\tilde{x} \in \Re^k$ representation can be an extremely good approximation to the original, and using PCA this way can significantly speed up your algorithm while introducing very little approximation error.
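The procedure described above can be sketched in plain NumPy. This is a minimal illustration under stated assumptions, not the method of any particular library: data is an (m, n) array with one example per row, the covariance uses a 1/m factor, and $k$ is chosen as the smallest value whose top eigenvalues retain at least 99% of the total variance (a common heuristic that this post does not itself specify). The helper names `pca_fit`, `choose_k`, `pca_reduce`, and `pca_reconstruct` are hypothetical, and the synthetic data (256 correlated features driven by 10 hidden factors plus small noise) is only a stand-in for real image patches.

```python
import numpy as np


def pca_fit(x):
    """Fit PCA to an (m, n) array x: m examples (rows), n features (columns).

    Returns the per-feature mean, the covariance eigenvectors as the columns
    of u (sorted by decreasing eigenvalue), and the matching eigenvalues.
    """
    mu = x.mean(axis=0)
    xc = x - mu                              # zero-mean every feature
    sigma = xc.T @ xc / x.shape[0]           # n x n covariance (1/m convention)
    eigvals, u = np.linalg.eigh(sigma)       # eigh returns ascending eigenvalues
    order = np.argsort(eigvals)[::-1]        # reorder to descending
    eigvals = np.clip(eigvals[order], 0.0, None)  # drop tiny negative round-off
    return mu, u[:, order], eigvals


def choose_k(eigvals, retain=0.99):
    """Smallest k whose top-k eigenvalues keep at least `retain` of the variance."""
    frac = np.cumsum(eigvals) / np.sum(eigvals)
    k = int(np.searchsorted(frac, retain)) + 1   # first index with frac >= retain
    return min(k, len(eigvals))


def pca_reduce(x, mu, u, k):
    """Project x onto the top-k principal directions; result has shape (m, k)."""
    return (x - mu) @ u[:, :k]


def pca_reconstruct(x_tilde, mu, u):
    """Map a k-dimensional representation back to n dimensions (approximately)."""
    k = x_tilde.shape[1]
    return x_tilde @ u[:, :k].T + mu


# Hypothetical stand-in for 16x16 patches: 256 correlated "pixels" driven by
# 10 hidden factors plus a little independent noise.
rng = np.random.default_rng(0)
m, n, latent = 500, 256, 10
z = rng.normal(size=(m, latent))
mixing = rng.normal(size=(latent, n))
x = z @ mixing + 0.01 * rng.normal(size=(m, n))

mu, u, eigvals = pca_fit(x)
k = choose_k(eigvals, retain=0.99)
x_tilde = pca_reduce(x, mu, u, k)
x_hat = pca_reconstruct(x_tilde, mu, u)

# Fraction of the centered squared norm lost by keeping only k components.
rel_err = np.sum((x - x_hat) ** 2) / np.sum((x - mu) ** 2)
print(f"k = {k} of {n}, relative reconstruction error = {rel_err:.2e}")
```

With the 1/m covariance, the relative reconstruction error equals the fraction of variance in the discarded eigenvalues, so retaining 99% of the variance bounds it at 1%. Because `pca_fit` only subtracts the mean, features on very different scales (like feature A above) would need to be standardized before fitting.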
